Introduction

Artificial Intelligence is such a vast and huge beast with switching hype topics every couple of months. For someone who is coming into this world it can be overwhelming and discouraging. If you feel like this, don't worry, you are not alone. I do feel the same way every now and then. This feeling came to me just recently when I wanted to build a new workshop around AI Agents and Agent orchestration. Let's dive into some of my findings from my research.

NOTE: What this blog post is not -> A detailed introduction to every multi-agent orchestration framework. This blog post should give you a concise overview of the different frameworks so you can make a first decision on which framework you look into.

Where the heck do I get started?

I wrote my first agent with LangGraph, and you might ask why - Simply! It was the only one I was familiar with. Of course, I've heard about many other frameworks out there, but I stuck to what I worked with in the past. This is absolutely fine but probably not sufficient going forward with AI development.

The first challenge is understanding what's out there. There are so many different frameworks from different companies, and each framework seems to be the best solution for building intelligent agents. Some examples are LangGraph, Microsoft AutoGen, crewAI, Google ADK, IBM's BeeAI, and many more.

For me, I wanted to understand what the strengths, weaknesses, and use cases are for these frameworks. Asking the right questions should help narrow your options. Do you want to build a single autonomous agent that needs to reason through tasks? Do you need multiple specialized agents collaborating on a problem? Are you working within a set ecosystem like Microsoft, Google Cloud, or AWS? What programming language do you want to use for your agents?

What are AI agents?

Before we dive into the different frameworks and their specifics, let's talk about what an AI agent is. The basic idea behind an AI agent is that it is a piece of software that uses an LLM’s reasoning to decide when and how to interact with external tools to achieve a specific goal. LLMs are amazing at understanding natural language and producing natural language to react to given input prompts. If you look at LLMs, they are the brain of the operation, but we need a body to do the hard labor. This is where agents come into play. Agents allow the LLM to access different resources and tools to execute tasks, gather information, or trigger other agents to do some work with so-called agent workflows.

The LLMs can directly interact with an agent or multiple agents if they are known to the LLM. You can make agents known to an LLM through different types of tools like an agent-supported client or a cloud landscape which interfaces with the LLM. With agents, LLMs can now control application workflows. Agents might need to call certain tools, gather resources, or use different prompts based on the state. That is why we need frameworks to build this orchestration properly under a human-controlled environment.

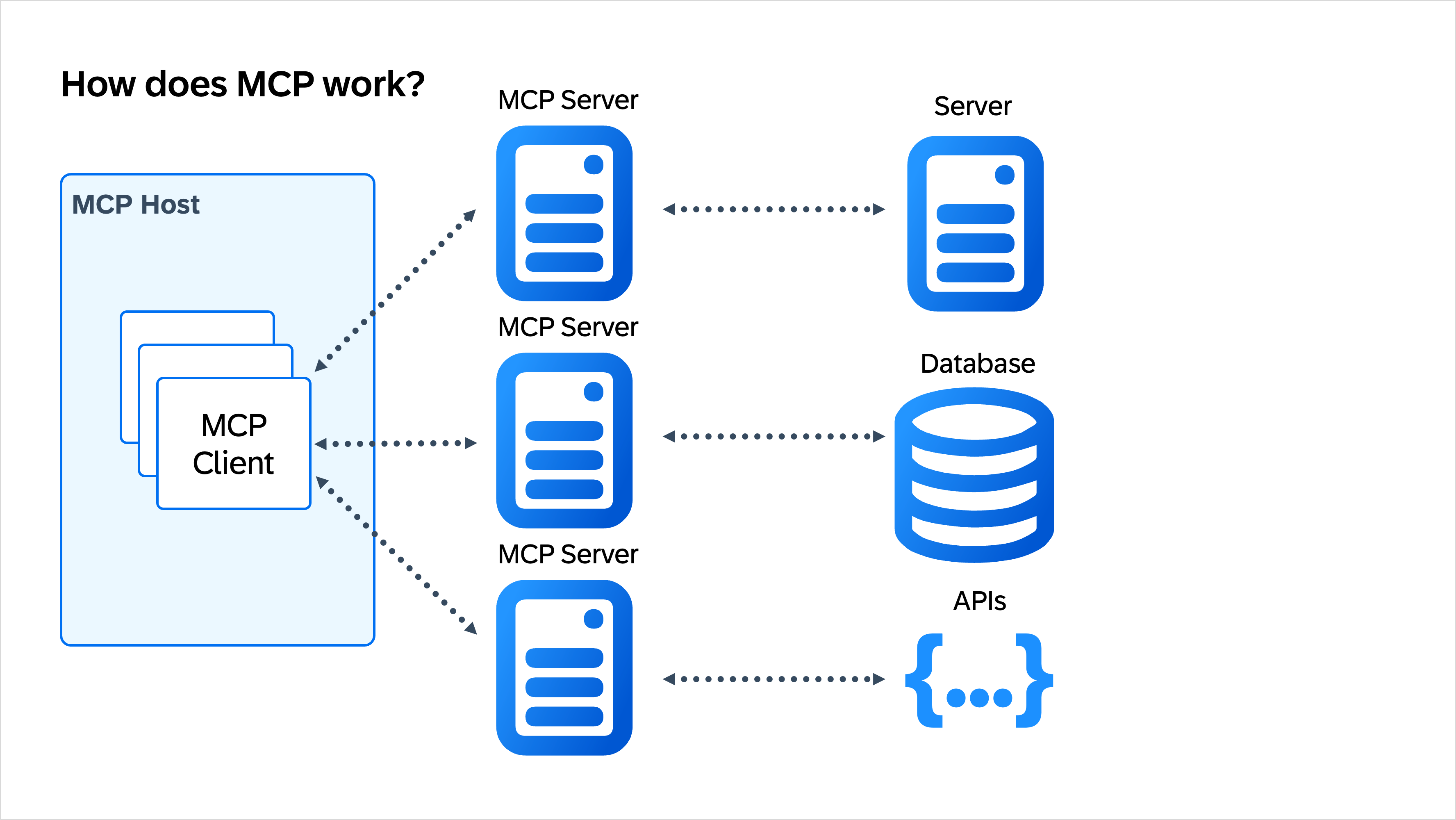

The agents can then access different tools, resources, or other agents to complete their task. In the past, this step could be cumbersome because there was no standard interfacing protocol defining how an agent would interact with the aforementioned parts. To solve this, Anthropic created a protocol to standardize the interfaces. The Model Context Protocol (MCP) was born.

MCP gives you a highly standardized protocol that allows you to create a clear interface implementation, giving the agents a set of functions to interact with. Because this protocol is standardized and highly adopted by the industry, there is a vast amount of MCP Servers (the implementation of the protocol) out there, ready to be consumed by your army of agents.

The diagram below gives a high-level overview on how MCP works:

What are the core capabilities of an agent framework?

Before we dive into the specifics of each framework, let's establish a common understanding of what the key capabilities are of such a framework.

The essence of every agent framework is:

1. Tool/Function/Resource Calling: The ability for agents to interact with external systems, APIs, databases, and other resources. 2. State & Memory Management: Keep track of conversation history, intermediate/final results, and context across multiple steps. This is especially important for complex agent workflows. 3. Orchestration: Providing a framework to coordinate the flow of tasks, whether it is multiple agents working together or a single agent's reasoning loop.

Every framework we'll discuss implements these capabilities differently, and understanding these differences is the key to choosing the right tool for your project.

How do LLMs interact with agents?

LLMs can interact with agents based on different patterns.

ReAct (Reasoning + Acting)

An LLM receives an input prompt and, based on the prompt, it reasons about what to do. Through reasoning, it decides on an action, what tool to execute, and observes the result to iterate over decision-making. This is the most used pattern in agent frameworks today.

1. Thought

2. Action

3. Observation

4. Thought

5. Action

6. repeat

Let's take a look at an example:

User sends prompt to LLM: "I need pricing on a new laptop." LLM: "Let me call the price catalog API to fetch pricing for a new laptop." Tool: "Replies with price." LLM: "I have an answer. The price is ..."

Function/Tool Calling

Function and tool calling is a subpart of ReAct as it describes the process of making different functions and tools available to the LLM through a framework.

The goal is to provide a list of available tools including descriptions to the LLM. The LLM can now make a reasoned decision on which tools to call. The surrounding framework (e.g., agent-supported LLM client, agent frameworks, etc.) executes the actions and returns the result to the LLM. The LLM uses the results to generate its final answer or re-iterate to another tool.

Most modern LLM clients already natively support this, e.g., GPT-4, Claude, Gemini.

Planning-Based

An LLM creates a complete plan upfront before it executes any actions. The plan contains the steps needed to accomplish the given goal. The LLM uses an agent to execute the plan step-by-step and can re-plan in case of failure or if the context changes.

Multi-Agent Orchestration

Multi-agent orchestration allows for multiple agents to communicate with each other through natural language messages. Tasks get delegated to agents through an LLM - "a manager LLM delegates tasks to specialized worker agents." The individual agents are specialized in what they are doing; they execute individually and pass on their result to the LLM or to the next agent. This pattern allows for multiple agents to work simultaneously, which is very powerful. In the end, the results are aggregated by the orchestrator.

Memory-Augmented

The memory-augmented pattern describes the usage of short-term and long-term memory for the LLMs to retrieve contextual information from. This information can be forwarded to an agent so that the agent learns from past interactions.

- Short-term memory: Conversation history in context window

- Long-term memory: Vector stores and databases

Reflection & Self-Critique

This is a crucial part of how LLMs work. The reasoning often includes reflection and self-critique in the LLM's generated output. The LLM reflects on the output, the given context, and checks for errors, inconsistencies, or missed requirements. This is an iterative process where the LLM refines its output.

A typical example is in vibe-coding. After generation of code, the LLM often tests and reviews the newly generated code. Based on the result, it tries to fix the caused errors.

Human-in-the-Loop

Often when working with an LLM, the LLM requests the input of a human to refine its context or to make a decision to move forward. For example: "Can I execute this terminal command?", "Should I create a concise PDF summarizing the result?", "Can I go ahead and book that flight for you?" This should always be utilized for high-stakes decisions or ambiguous situations. In LangGraph, this is solved by providing "interrupt" nodes in the workflow.

Summary

Looking at the different patterns, it is clear that there are three different ways an AI workflow can be driven:

- LLM-driven: The agent decides everything (autonomous)

- Framework-driven: There is a pre-defined workflow; the LLM fills in with reasoning at specific nodes

- Hybrid: The framework defines the structure, but the LLM makes the tactical decisions

Comparing major agent frameworks

LangGraph

LangGraph is being developed by the LangChain team. It is designed for building stateful, multi-agent applications. LangGraph provides you with a low-level orchestration framework and runtime. Its main purpose is agent orchestration, and it allows you to manage and deploy stateful agents. LangGraph is an MIT-licensed open-source library and is free to use.

LangGraph is designed for complex and enterprise-ready use cases. Through its low-level APIs, you have full control over the orchestration process.

As the name suggests, LangGraph orchestrates agents via a graph. The nodes can be agents or tools and the edges define their communication route.

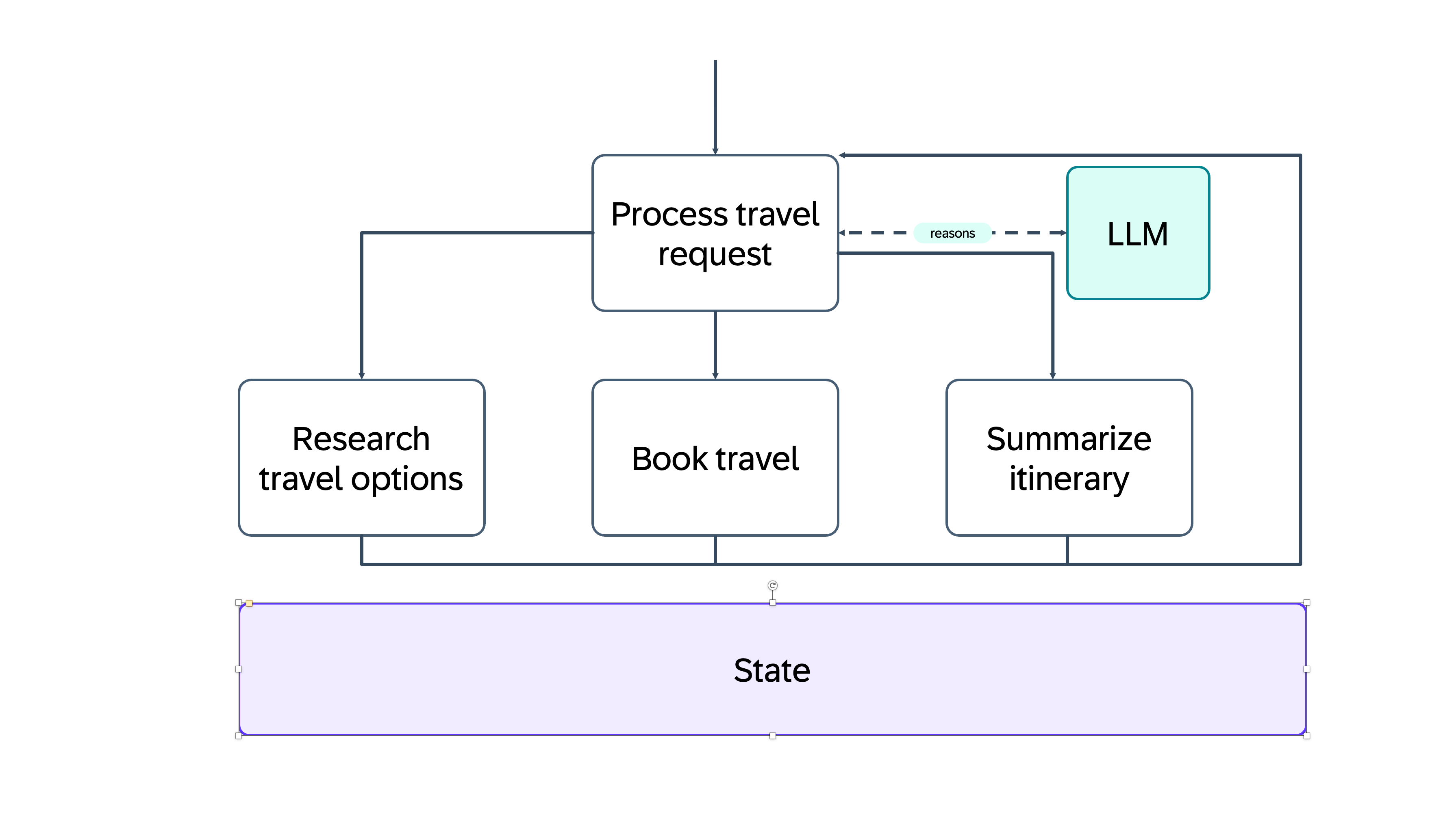

Let's take a look at an example to better understand what that means:

We assume we want to build an AI application that can research, book, and build an itinerary summarization for a business trip.

We define a new process that should process incoming travel requests. That process has three actions available: Research, Book, and Summarize.

Each of these actions is defined as a node. Behind each of these actions can be an agent performing the action. All nodes have access to the application's state; they can read and write into that state. The initial node that triggers the process uses an LLM to reason which node should be contacted. The lines in between are called edges, and they define the communication between the nodes. As you can see, the state is global within that process and available to all nodes.

Before we continue to the other frameworks, let's talk quickly about the difference between LangGraph and LangChain.

LangGraph is a framework built on top of LangChain. LangChain provides a broad array of APIs for working with LLMs. It provides you with tools, chains, memory management APIs, and the capability of building agents. LangGraph comes into play to connect these tools with each other. It helps you manage and orchestrate all the moving pieces, and it allows for building a multi-agent flow.

Capabilities:

- Stateful, graph-based agent orchestration

- Cycles and branching logic support

- Human-in-the-loop with interrupts

- Persistent checkpointing and time travel

- Multi-agent coordination

- Custom agent patterns

Programming Language Support: Python, JavaScript/TypeScript

Pros/Cons:

| Pros | Cons |

|---|---|

| High flexibility and powerful for complex workflows | Steep learning curve |

| Production-ready with strong state management | More verbose than simpler frameworks |

| Excellent debugging with LangSmith integration | Requires understanding of nodes, edges, state management |

| Active developer community | - |

crewAI

crewAI is an open-source multi-agent orchestration framework. It is solely Python-based and allows for AI agent collaboration within a "crew" to complete tasks. crewAI provides a solid framework for multi-agent workflow automation.

Every agent within a crew can autonomously work, delegate tasks, and ask questions within its crew. The AI agents within a crew are usually complementary and leverage existing or custom tools to complete assigned tasks.

Agents within crewAI can utilize any open-source LLM or API.

The important distinction from LangGraph is that each agent has a defined role assigned and should complete a pre-defined task. The workflow is usually sequential.

crewAI is not as low-level as LangGraph, and with that, you don't have all aspects of customization and fine-grained control over the orchestration of the agents. However, it is very powerful and serves a use-case-tailored approach by letting you create dedicated crews responsible for specific scenarios.

Capabilities:

- Role-based agent teams

- Sequential and hierarchical processes

- Task delegation and collaboration

- Built-in agent roles and tools

- Memory and context sharing

Programming Language Support: Python

Pros/Cons:

| Pros | Cons |

|---|---|

| Simple API for multi-agent systems | Less flexible than LangGraph |

| Intuitive role-based design | Limited control over agent interactions |

| Great documentation and examples | Relatively new with smaller ecosystem compared to LangGraph |

| Quick to get started | - |

| Good for business process automation | - |

Microsoft AutoGen

Microsoft AutoGen is an open-source framework for building AI agents and other AI applications. The focus of this framework is on autonomous agent conversations. The agents talk to each other without supervision, and they decide what they need to do next.

The AutoGen framework contains three layers with APIs that allow you to build your AI agent application.

AutoGen provides three different layers within its framework, each responsible for different abstractions when it comes to orchestration and multi-agent communication.

The core layer

The core layer provides the heart of the framework, it contains everything AutoGen needs to function.

"Core API implements message passing, event-driven agents, and local and distributed runtime" - Microsoft

This means the core API provides the means for the agents to talk to each other, provides eventing so the agents get triggered on certain events, and it has everything baked in to not only run locally but also across different server infrastructures.

The AgentChat Layer

The AgentChat layer allows you as the user to interact with the agents. These agents are generally called conversable agents. The layer also provides agents that help assist you as the user by reasoning without the user's involvement. This helps with the autonomous orchestration of the different agents.

The Extension Layer

The extension layer allows you to use default extensions by Microsoft or build your own custom extensions.

Capabilities:

- Multi-agent conversations

- Human/AI and AI/AI collaboration

- Built-in code execution

- Group chat patterns

- Automatic agent replies

- Custom conversation patterns

Programming Language Support: Python, .NET

Pros/Cons:

| Pros | Cons |

|---|---|

| Excellent for collaborative multi-agent scenarios | Can be unpredictable with multiple agents |

| Strong code execution capabilities | Less control over exact execution flow |

| Well-documented with many real-world examples | Resource-intensive with many agents through natural agent-to-agent conversations |

| Good for research and prototyping | Primarily research focused |

| Supports complex conversation flows | - |

Google Agent Development Kit (ADK)

Google's Agent Development Kit is the official framework from Google for building production-ready agents. The framework integrates seamlessly with Google Cloud services and Vertex AI.

Vertex AI is Google Cloud's unified machine learning platform that provides an extensive set of tools and services for building, deploying, and scaling AI applications.

The ADK has built-in support for Gemini models and tool calling. A major advantage of ADK is that it puts emphasis on scalability and enterprise readiness. The core features are agent orchestration, memory management, monitoring, and cloud-native deployment. The deployments can directly be monitored and observed through Google Cloud's toolset.

You might think that this sounds similar to LangGraph. Well, it comes down to the ecosystem. Google ADK is tied into Google Cloud and uses Gemini models. It is a managed, enterprise-ready platform, whereas LangGraph is an open framework that gives you maximum control and flexibility with the "downside" of doing a lot of the setup yourself.

Capabilities:

- Integration with Vertex AI

- Native Gemini model support

- Agent orchestration & memory management

- Cloud-native deployment

- Monitoring and observability through Google Cloud

Programming Language Support: Python, Java, Go

Pros/Cons:

| Pros | Cons |

|---|---|

| Tight integration with Google Cloud | Tied to the Google Cloud ecosystem |

| Access to Gemini models | May have vendor lock-in concerns |

| Enterprise scalability | - |

| Built-in deployment infrastructure | - |

| Strong production features for monitoring and observability | - |

LlamaIndex

The LlamaIndex framework is open-source and designed to connect LLMs with external data sources for document AI workflows and data-driven applications. With its focus on data integration, the framework provides powerful capabilities, giving you a lot of options for data ingestion. The framework's documentation states that it can support over 100 data sources like PDFs, databases, APIs, web pages, and more. The framework started as a Retrieval Augmented Generation (RAG) framework but has evolved into an agentic framework with a strong focus on document processing.

LlamaIndex is very good at indexing & retrieval of vectors, search by keyword, or processing knowledge graphs. The agents within the framework can query different data sources, they can combine multiple query engines as tools, and it is simply amazing at finding you the right information.

Some of the use cases can be:

- Document Q&A systems

- Knowledge base chatbots

- Research assistants across multiple documents

- Semantic search applications

Capabilities:

- Strong data ingestion capabilities

- Provides multiple indexing strategies

- Support of multiple query engines

- Agent integration for coordination between different tools and data sources

Programming Language Support: Python, TypeScript

Pros/Cons:

| Pros | Cons |

|---|---|

| Strong RAG capabilities | Focus lies on RAG capabilities rather than agentic AI |

| Extensive data connector ecosystem | Agent orchestration is not as sophisticated compared to dedicated agent frameworks |

| Strong community and documentation | Stronger in single-agent scenarios compared to multi-agent scenarios |

| LLM agnostic | If you don't need document processing, this is not for you |

| Easy integration with vector databases | - |

How to select the right framework for you?

┌──────────────────────────────────────────────┐

│ What are you primarily building? │

└───────────────────────────┬──────────────────┘

│

┌───────────────┼─────────────────────────┐

│ │ │

Knowledge / Data Agent Collaboration Workflow Orchestration

(Search, Q&A, RAG) (Agents work together) (State, branching, control)

│ │ │

┌────┴─────┐ ┌───┴─────┐ ┌───┴─────┐

│ │ │ │ │ │

Large docs? Semantic Simple team? Full control & Managed cloud?

Many sources? retrieval Roles & tasks? complex flows? GCP ecosystem?

│ │ │ │ │ │

YES YES YES NO YES YES

│ │ │ │ │ │

┌────┴────┐ │ ┌───┴────┐ ┌──┴───────┐ ┌───┴─────┐ ┌─┴──────────┐

│ Llama │ │ │ crewAI │ │ AutoGen │ │ Lang │ │ Google ADK │

│ Index │ │ │ │ │ │ │ Graph │ │ │

└─────────┘ │ └────────┘ └──────────┘ └─────────┘ └────────────┘

│

(Vector DBs, RAG,

document Q&A)

Wrapping up

When I started this research, I felt pretty overwhelmed by all the different agent frameworks out there. But after diving deep into each one, I've realized something important: there's no single "best" framework, just the right one for what you're trying to build.

Here's what I've learned: If you need complex workflows with full control over state and branching logic, LangGraph is your friend. It has a learning curve, but the power you get is worth it. If you want to quickly build a team of specialized agents without getting into the weeds, crewAI makes it super simple with its role-based approach. For projects where agents need to autonomously talk to each other and execute code, AutoGen is fantastic. If you're already on Google Cloud and working with Gemini, Google ADK gives you everything managed and ready to scale. And if your agents need to work with tons of documents and data, LlamaIndex is unbeatable.

The cool thing is that all these frameworks share the same fundamental concepts: tool calling, memory management, and orchestration. Once you understand these core ideas in one framework, switching to another becomes much easier.

Of course, there might also be an aspect of choosing a framework based on the programming language you prefer. But honestly, if there is one thing I've learned, it is that you must understand and learn Python. If you want to work with Artificial Intelligence technologies, there is no way around it.

My honest advice? Don't stress about picking the "perfect" framework. Start with what fits your current project and tech stack. I started with LangGraph because I already knew it, and that's totally fine! Build something, break it, learn the patterns. Those skills will transfer. If you are completely unsure what you want to do, you can always choose a learning playground to understand the concepts. One is SwarmAI, which allows you to build and work with multi-agent collaboration tools out of the box. This is a great no-code solution to learn.

This space is moving fast! New frameworks, protocols like MCP, better integrations, it's all happening now. But the fundamentals we've covered here will stick around. Understanding how agents work, how they communicate, and how to orchestrate them properly will hopefully help you in the future.

So pick a framework, start building, and don't be afraid to experiment. I'm still learning too, and honestly, that's the fun part. If you end up building something cool, I'd love to hear about it!

Happy coding, and welcome to the world of AI agents!